Inside Neuroscience: New Brain-Computer Interfaces Can Advance Quality of Life

When injury or disease damages the nervous system, the resulting disabilities can severely affect productivity, independence, and other aspects of daily functioning. Paralysis, for example, affects approximately 5.5 million people (roughly one in 60) in the United States alone. Most efforts to help people living with these disabilities have focused on helping them adapt to their new circumstances. Today, researchers are working to restore productivity and independence by giving individuals increasingly precise fine-motor control.

Brain-computer interfaces (BCIs), technologies that link the brain directly to external devices like prosthetics, aim to restore function in people with movement or sensory disabilities by bypassing the injury site. At the “AI Helping Hands and Eyes” press conference at Neuroscience 2018, researchers presented cutting-edge advances that have the potential to restore or even replace motor and sensory function.

“I think we’re in a particularly exciting time,” said press conference moderator Chethan Pandarinath, assistant professor at the Systems Neural Engineering Lab at Georgia Tech. “Our ability to interface with the brain is rapidly improving due to advances in neural interfacing technology. In parallel, there are advances in computer science and artificial intelligence that are giving us new tools to understand the complex activity of the nervous system.”

Neuroprosthetics Restore Hand Movement After Paralysis

People living with paralysis often stress that regaining use of their hands is key to recovering independence and daily functioning. In a pilot study by Battelle Memorial Institute and The Ohio State University, a participant had a BCI implanted in his brain to record neural activity.

The participant was asked to imagine a movement, and the resulting neural activity was decoded using machine learning. A sleeve on his paralyzed arm then stimulated the muscles to perform that movement. In essence, the system connects the brain directly to the arm, and the participant was able to use the BCI to make the movements he imagined.
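The decode-then-stimulate loop can be illustrated with a deliberately simple sketch. The article does not say which machine learning method the Battelle team used, so this example swaps in a basic nearest-centroid classifier. The feature values, the movement labels, and the `SLEEVE_PATTERNS` electrode mapping are all hypothetical placeholders, not data or settings from the study.

```python
from math import dist

# Hypothetical training data: each sample pairs a feature vector (for
# example, firing rates on a few recording channels) with the movement
# the participant was imagining when it was recorded.
TRAINING = [
    ([10.0, 2.0, 1.0], "open_hand"),
    ([12.0, 3.0, 0.0], "open_hand"),
    ([1.0, 9.0, 8.0], "close_hand"),
    ([2.0, 11.0, 7.0], "close_hand"),
]

# Hypothetical mapping from each decoded movement to the sleeve
# electrodes that would be switched on to drive the arm muscles.
SLEEVE_PATTERNS = {
    "open_hand": [1, 2, 5],
    "close_hand": [3, 4, 6],
}


def train_centroids(samples):
    """Average the feature vectors recorded for each imagined movement."""
    sums, counts = {}, {}
    for features, label in samples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}


def decode(centroids, features):
    """Pick the movement whose average activity is closest to the new sample."""
    return min(centroids, key=lambda label: dist(centroids[label], features))


centroids = train_centroids(TRAINING)
movement = decode(centroids, [11.0, 2.5, 1.0])
print(movement, SLEEVE_PATTERNS[movement])
```

Real decoders work with far more channels and more sophisticated models. The sketch only shows the overall shape of the pipeline: learn what each imagined movement looks like, match new activity against it, and translate the result into a stimulation pattern.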

Gaurav Sharma, lead author and researcher at Battelle, said, “We are not done yet. Our goal is basically to take this technology out of the lab to the home of people who need it.” With this in mind, Sharma’s team has miniaturized the technology so that it is wearable, portable, and easier to use.

Avatar Helps Stroke Victims Regain Movement

After post-acute care ends, stroke survivors often return home with persistent motor impairments and little continued assistance. Researchers at g.tec medical engineering in Austria have developed a BCI system, called recoveriX, that links a computer-based avatar to the patient's brain activity and to electronic feedback. With the system, the patient imagines an arm movement, sees the avatar perform it, and receives stimulation that moves the arm. The patient thus feels and learns the movement at the same time.

Besides engaging the sensory cortex, "we are also using mirror neurons. The patient is seeing the avatar movement in front of him; mirror neurons are responsible for copying behavior," explained corresponding author Christoph Guger. "This leads to more activation [of the brain]."

Patients attend 25 clinic sessions of 45 minutes each and perform 5,000 movement repetitions. At present, g.tec has 50 installations, from Japan to Hawaii, where patients are treated regularly; Austria alone accounts for 2,000 treatments. So far, all treated patients have shown improvement in motor function.

Prosthetic Arms That Communicate Sensory Information

Today's prosthetic arms still cannot convey critical information such as contact force, object size, and pressure, so users must rely heavily on sight to judge how well they are gripping an object. Researchers at Florida International University are working to fill this gap with sensory feedback that lets users "feel" objects and improves their daily functioning.

The Neural Enabled Prosthetic Hand System stimulates sensory nerve fibers through wire electrodes implanted in nerves of the arm. Sensors embedded in the prosthetic hand send information back to these implanted electrodes, allowing the person to perceive sensations. One patient is already using the system regularly at home, and five more are being recruited.
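The article does not say how sensor readings are turned into stimulation. One common way to picture such a feedback loop is a mapping from pressure at the prosthetic fingertip to the rate of stimulation pulses. The minimal sketch below assumes a simple linear mapping with clamping. The function name, the kPa range, and the pulse-rate range are hypothetical placeholders, not parameters of the FIU system.

```python
def pressure_to_pulse_rate(pressure_kpa, max_kpa=100.0, min_hz=10.0, max_hz=100.0):
    """Map a fingertip pressure reading to a stimulation pulse rate.

    All ranges are hypothetical placeholders, not the FIU system's settings.
    """
    if pressure_kpa <= 0.0:
        return 0.0  # no contact: no stimulation
    fraction = min(pressure_kpa, max_kpa) / max_kpa  # clamp to the sensor's range
    return min_hz + fraction * (max_hz - min_hz)


# Simulated readings as the hand closes on an object and squeezes harder.
readings = [0.0, 25.0, 50.0, 150.0]
rates = [pressure_to_pulse_rate(p) for p in readings]
print(rates)
```

Clamping keeps a very firm grip from pushing stimulation past a safe ceiling. Returning zero when nothing is touched means the user feels nothing until contact actually occurs.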

In the long run, the technology could benefit people beyond those who have had an amputation. Lead author Ranu Jung explained, "This implanted technology could actually be utilized in the context of bioelectronic medicine. You could target different nerves by directly stimulating them and…influence other organs and their function."

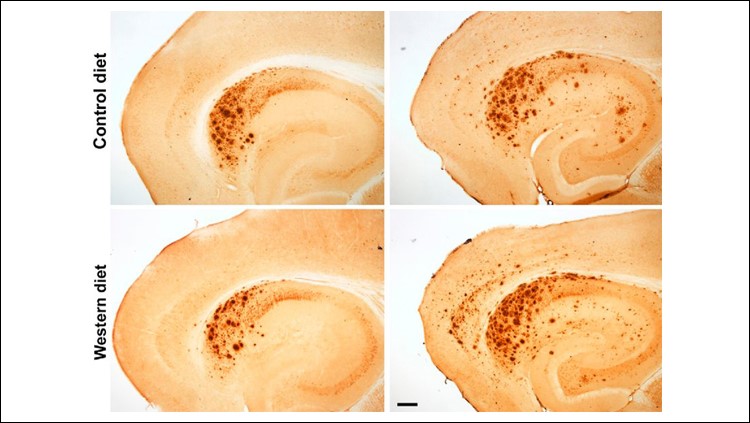

Drawing a “Picture” in the Brain for Blind Patients

Even in people who have lost their sight, the part of the brain that processes vision is often intact. Electrically stimulating that region can produce a perceived spot of light, which researchers have speculated could be used to project simple images directly into the brain.

Researchers at Baylor College of Medicine used a visual cortical prosthesis (VCP), in which electrodes stimulate the brain to convey information from a wearable camera. The approach resembles tracing a letter on someone's palm: "instead of tracing on the patient's palm, we trace it directly on their brain using electrical current," said lead author Michael Beauchamp.

A grid of electrodes implanted in the visual cortex "draws" the image by activating the electrodes one at a time in sequence. Earlier approaches presented the whole image at once, which left patients confused about the object's size and shape. With the sequential method, patients could reproduce the shape that had been drawn: they could not only identify it but also draw it themselves.
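The difference between the two stimulation strategies can be shown as two timing schedules for the same shape. This is a minimal sketch. The grid coordinates, the `Electrode` type, the letter path, and the 50 ms step between electrodes are hypothetical choices made for illustration and are not taken from the Baylor study.

```python
from typing import List, Tuple

# A hypothetical electrode address: (row, column) in the implanted grid.
Electrode = Tuple[int, int]


def all_at_once(path: List[Electrode]) -> List[Tuple[int, Electrode]]:
    """Older approach: every electrode in the shape fires at time 0."""
    return [(0, electrode) for electrode in path]


def traced(path: List[Electrode], step_ms: int = 50) -> List[Tuple[int, Electrode]]:
    """Sequential approach: fire electrodes one by one along the stroke order."""
    return [(i * step_ms, electrode) for i, electrode in enumerate(path)]


# The letter "L" drawn top to bottom, then left to right, on a 3 x 3 grid.
letter_l = [(0, 0), (1, 0), (2, 0), (2, 1), (2, 2)]
for start_ms, electrode in traced(letter_l):
    print(f"{start_ms:>4} ms: stimulate electrode {electrode}")
```

Both schedules touch the same electrodes. Only the traced version preserves stroke order, which is the extra cue the palm-tracing analogy relies on.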

A Multi-Purpose, Wearable Device to Process the Visual World

Single-purpose devices can help people who are blind with individual tasks such as navigation, reading written information, or locating an item. Researchers at Brown University are developing an "intelligent visual prosthesis" that handles many of these tasks in one device, helping individuals regain independence and productivity.

Using data from two cameras and an inertial measurement unit mounted on a pair of glasses, the device's belt-mounted wearable computer applies computer vision and machine learning to continuously process visual cues in the user's surroundings. Users choose what kind of information they want to receive. For example, when the user asks "Where are my keys?", the device locates the keys by feeding the camera images through an artificial neural network, then plays sounds that guide the user to them.
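The article does not describe what the guidance sounds encode. One plausible way to picture the last step is to turn the horizontal position of a detected object into a left/right audio cue. This minimal sketch assumes the neural-network detector (not shown) has already returned the center of the keys' bounding box. The function name, the stereo-pan convention, and the tolerance value are hypothetical.

```python
def guidance_cue(box_center_x: float, image_width: float, tolerance: float = 0.1):
    """Turn a detected object's horizontal position into a stereo audio cue.

    Returns (pan, hint): pan runs from -1.0 (full left) to 1.0 (full right).
    """
    pan = 2.0 * box_center_x / image_width - 1.0
    if abs(pan) <= tolerance:
        return pan, "straight ahead"
    return pan, "left" if pan < 0 else "right"


# Suppose a detector (not shown) found the keys in a 640-pixel-wide frame.
for center_x in (80, 320, 600):
    print(center_x, guidance_cue(center_x, 640))
```

A real system would also use depth from the two cameras and head orientation from the inertial measurement unit. The sketch covers only the direction cue.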

Michael Paradiso, corresponding author, said, “Right now we’re trying to optimize the system so [individuals] can do daily tasks like navigate complex environments or pick up things around the house. What we’d like to do is eventually have a hybrid system where you have some sort of visual experience that’s then paired with very precise information coming through sound and the device speaking to you.”

What’s Next for BCIs

Movement disabilities and visual impairment caused by stroke, injury, and other conditions remain pervasive challenges for millions of people. The research presented here continues to make progress toward improving their lives by restoring function they had lost. Further advances in understanding the brain should keep driving the field forward.

“What’s particularly impressive here is — these aren’t just ideas,” explained Pandarinath. “The researchers have shown the potential of these ideas by working directly with people with disabilities.”